Spreadsheets are powerful.

But they are also fragile.

When I first worked on an ISO 27001-aligned risk register, it looked structured and complete. Assets were listed. Threats were documented. Likelihood and impact were scored. Controls were mapped to Annex A.

Everything seemed organised.

But something important was missing.

Consistency.

That’s when I decided to automate the scoring model using Python.

Not to replace governance, but to strengthen it.

The Problem With Manual Risk Scoring

Risk registers often rely on manual scoring:

- Likelihood is rated by judgement.

- Impact is estimated.

- Final risk scores are calculated in Excel.

- Categories are assigned manually.

Even with good intentions, this introduces:

- Inconsistency between assessors

- Scoring bias

- Fatigue errors

- Difficulty prioritising at scale

Governance works best when it is defensible and repeatable.

Automation helps achieve that.

The Model: How the Risk Scoring Worked

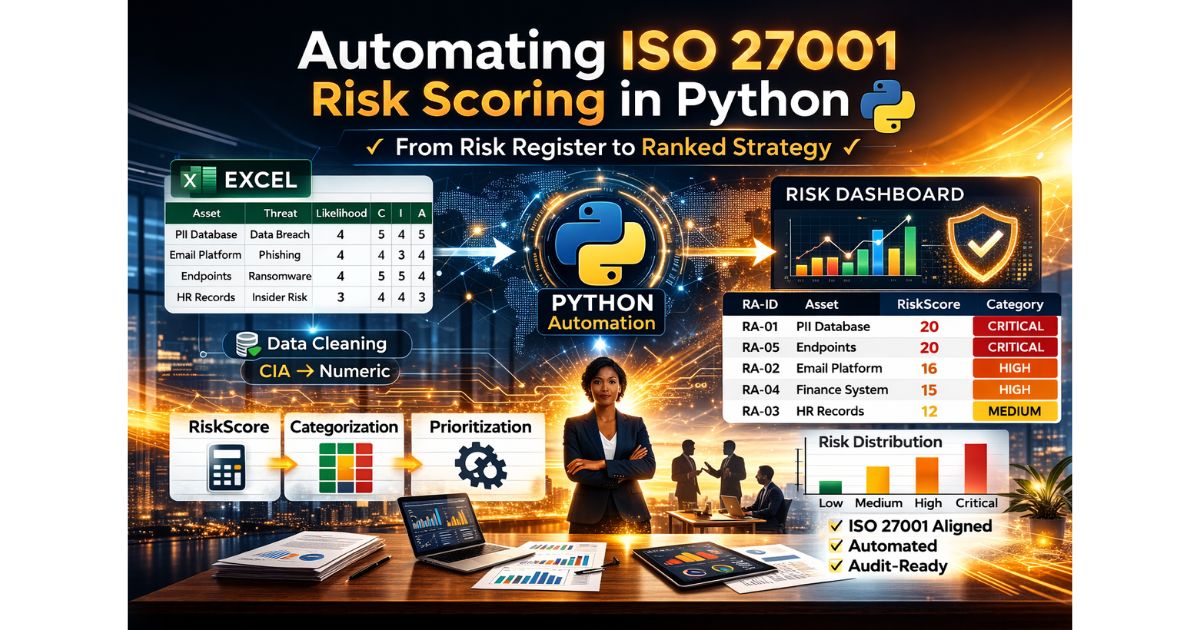

The goal was simple:

Take a structured ISO 27001 risk register and build a consistent, automated scoring engine.

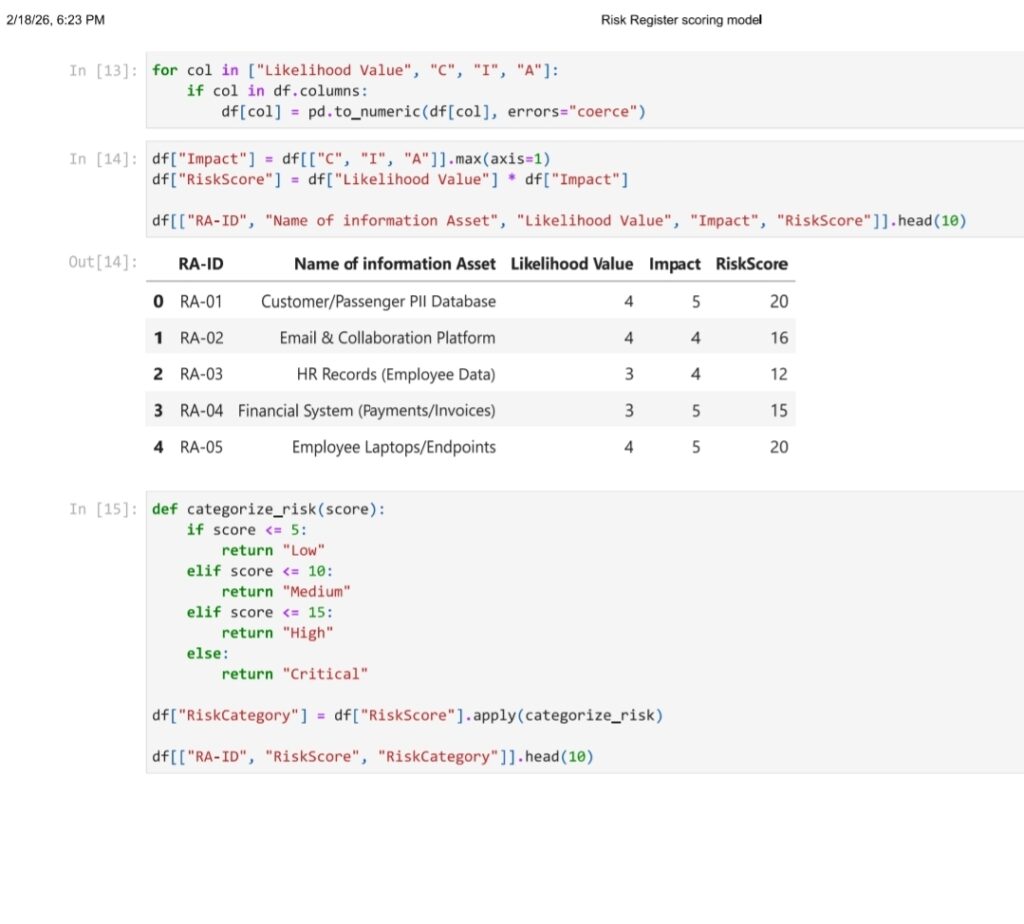

The Python-based model:

- Loaded the risk register directly from Excel

- Cleaned and structured the dataset

- Converted qualitative CIA values (Confidentiality, Integrity, Availability) into numeric form

- Calculated Impact using the highest CIA value

- Computed RiskScore = Likelihood × Impact

- Categorised risks into Low, Medium, High, and Critical

- Sorted risks to produce a ranked output
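The steps above can be sketched in plain Python. This is a minimal illustration, not the original script. It assumes that Likelihood is already numeric (1-5), that each CIA rating uses a hypothetical five-level label scale, and that the rows have already been loaded from the Excel register (for example with pandas' `read_excel`). The column names mirror the terms used above but are assumptions, and the sample rows are made up. Categorisation is left out here.

```python
# Minimal sketch: clean CIA labels, convert them to numbers, compute
# Impact and RiskScore, then rank. Scale, column names and rows are
# hypothetical; real rows would come from the Excel register.

# Hypothetical mapping from qualitative CIA labels to a 1-5 scale.
CIA_SCALE = {"very low": 1, "low": 2, "medium": 3, "high": 4, "very high": 5}


def to_numeric(label: str) -> int:
    """Normalise a qualitative CIA label and convert it to a number."""
    key = " ".join(str(label).split()).lower()  # trim and collapse whitespace
    if key not in CIA_SCALE:
        # Fail loudly instead of guessing, so bad data is fixed at source.
        raise ValueError(f"Unknown CIA rating: {label!r}")
    return CIA_SCALE[key]


def score_row(row: dict) -> dict:
    """Return a copy of the row with Impact and RiskScore added."""
    cia = [to_numeric(row[c]) for c in ("Confidentiality", "Integrity", "Availability")]
    impact = max(cia)  # worst-case CIA dimension
    return {**row, "Impact": impact, "RiskScore": int(row["Likelihood"]) * impact}


# Made-up sample rows, including messy labels a manual register often has.
register = [
    {"Asset": "Asset A", "Likelihood": 4,
     "Confidentiality": "Very High", "Integrity": "High", "Availability": "Medium"},
    {"Asset": "Asset B", "Likelihood": 2,
     "Confidentiality": " low ", "Integrity": "Low", "Availability": "Medium"},
]

# Score every row and rank by RiskScore, highest first.
scored = sorted((score_row(r) for r in register), key=lambda r: r["RiskScore"], reverse=True)
for r in scored:
    print(r["Asset"], r["Impact"], r["RiskScore"])  # Asset A 5 20, then Asset B 3 6
```

Raising an error on an unrecognised label is a deliberate choice in this sketch: silently defaulting to a middle value would reintroduce exactly the inconsistency the automation is meant to remove.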

Instead of manually scanning rows, the model produced a prioritised risk list instantly.

What changed was not just speed; it was clarity.

Why Impact Was Calculated Using the Worst-Case CIA Value

In ISO 27001 risk assessments, impact is often linked to Confidentiality, Integrity, and Availability.

Rather than averaging these values, I calculated impact using the maximum CIA score.

Why?

Because a severe impact in any one dimension can materially affect the business.

For example:

- A breach of customer PII severely affects confidentiality

- Ransomware severely affects availability

- Data tampering affects integrity

Using the maximum value aligns better with real-world risk severity.

This small design decision makes the model more conservative and more realistic.
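A quick comparison shows why averaging dilutes a severe single-dimension impact. The ratings below are hypothetical (1-5 scale) for a ransomware scenario with modest confidentiality and integrity impact but severe availability impact:

```python
# Hypothetical CIA ratings (1-5 scale) for a ransomware scenario.
ransomware = {"Confidentiality": 2, "Integrity": 2, "Availability": 5}
likelihood = 3  # hypothetical likelihood on a 1-5 scale

impact_max = max(ransomware.values())                     # worst-case dimension
impact_mean = sum(ransomware.values()) / len(ransomware)  # averaged dimensions

print("max-based score:", likelihood * impact_max)    # 15
print("mean-based score:", likelihood * impact_mean)  # 9.0
```

With the mean, the availability impact is pulled down by the two unaffected dimensions, and the risk lands well below where the business would actually feel it.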

From Risk Score to Risk Category

After calculating RiskScore, the model categorised each risk as:

- Low

- Medium

- High

- Critical

This step matters.

Leadership rarely responds to raw numbers.

They respond to thresholds and priorities.

By defining consistent scoring bands, the model ensures:

- Clear escalation paths

- Transparent decision-making

- Easier reporting to stakeholders

Automation removes ambiguity from categorisation.
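Consistent bands can be expressed as a single lookup. The thresholds below are hypothetical, since the actual bands belong in the organisation's risk methodology; the sketch assumes scores on a 1-25 scale (Likelihood 1-5 × Impact 1-5):

```python
from bisect import bisect_right

# Hypothetical band boundaries: each value is the lowest score of the
# next band up (Medium starts at 5, High at 10, Critical at 15).
THRESHOLDS = [5, 10, 15]
CATEGORIES = ["Low", "Medium", "High", "Critical"]


def categorise(score: int) -> str:
    """Map a RiskScore on a 1-25 scale to a category using fixed bands."""
    if not 1 <= score <= 25:
        raise ValueError(f"RiskScore out of range: {score}")
    return CATEGORIES[bisect_right(THRESHOLDS, score)]


for s in (4, 5, 12, 20):
    print(s, categorise(s))  # 4 Low, 5 Medium, 12 High, 20 Critical
```

Because `bisect_right` is used, a score that sits exactly on a boundary falls into the higher band. Keeping the thresholds in one list also makes them easy to review and sign off alongside the methodology.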

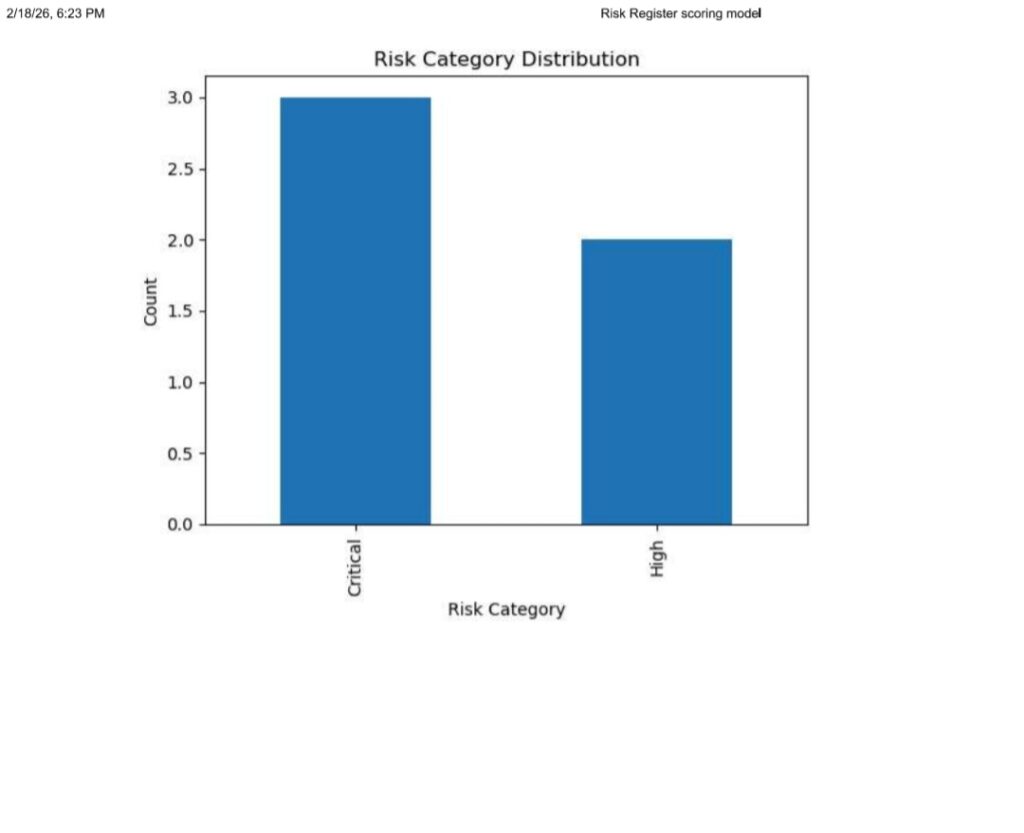

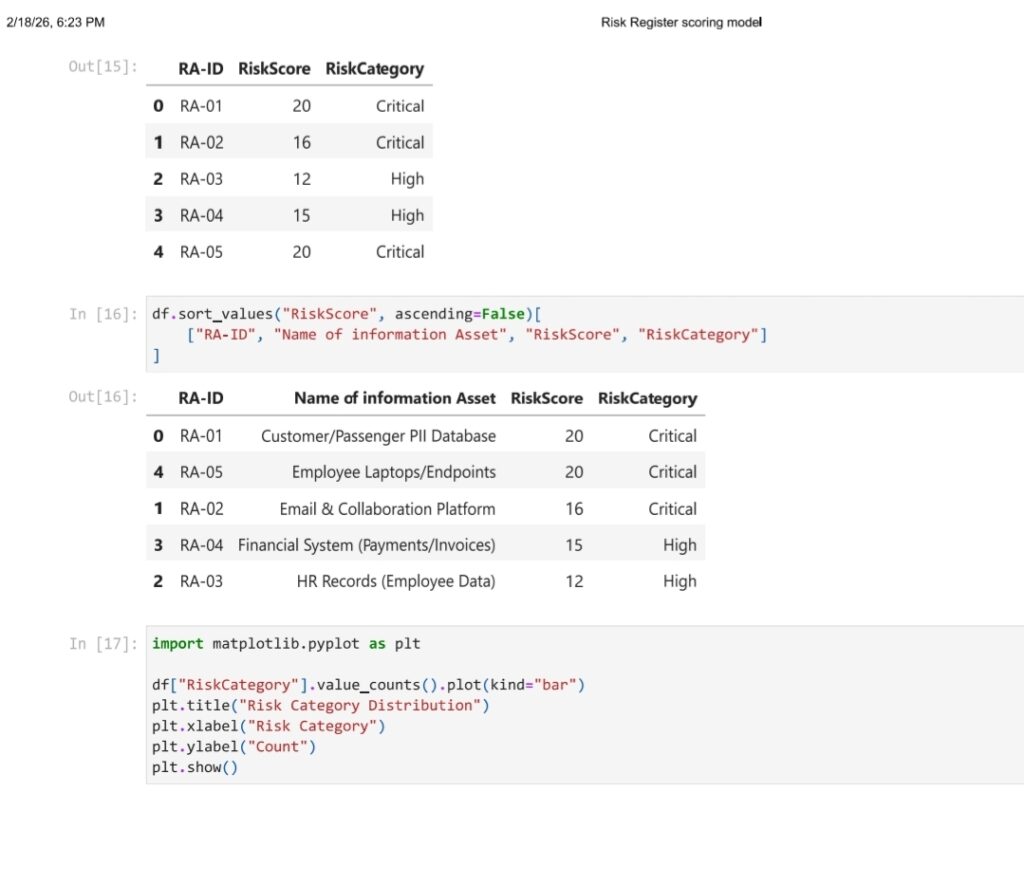

What the Ranked Output Revealed

Once automated and sorted, patterns became clearer.

Assets such as:

- Customer/Passenger PII database

- Employee laptops/endpoints

- Email and collaboration platforms

scored among the highest risks.

These are common enterprise risk drivers.

The automation did not create new risks.

It revealed them clearly.

That clarity supports strategic decisions:

- Where to invest in controls

- Which assets require immediate treatment

- Where monitoring is sufficient
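The ranking behind these decisions is essentially a sort, but a deterministic tie-break helps. Without one, two risks with the same score can change places simply because rows were reordered in the spreadsheet. The following minimal sketch assumes higher Impact wins a tie and asset name breaks any remaining tie. Both rules are hypothetical, and the rows are made up:

```python
# Hypothetical scored rows; names and values are made up for illustration.
scored = [
    {"Asset": "Asset C", "Impact": 4, "RiskScore": 12},
    {"Asset": "Asset A", "Impact": 3, "RiskScore": 12},
    {"Asset": "Asset B", "Impact": 5, "RiskScore": 20},
    {"Asset": "Asset D", "Impact": 2, "RiskScore": 4},
]

# Highest RiskScore first; ties broken by higher Impact, then by asset
# name, so the order does not depend on row order in the register.
ranked = sorted(scored, key=lambda r: (-r["RiskScore"], -r["Impact"], r["Asset"]))

for rank, r in enumerate(ranked, start=1):
    print(rank, r["Asset"], r["RiskScore"])
```

Here Asset C ranks above Asset A because both score 12 but Asset C has the higher worst-case impact.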