Most cyber risk registers look structured.

They have:

- A likelihood score

- An impact score

- A final rating (Low, Medium, High)

- Assigned controls

- An owner

On paper, it appears disciplined.

But in practice, many of those scores are unreliable.

Not because people are careless, but because the scoring process itself is weak.

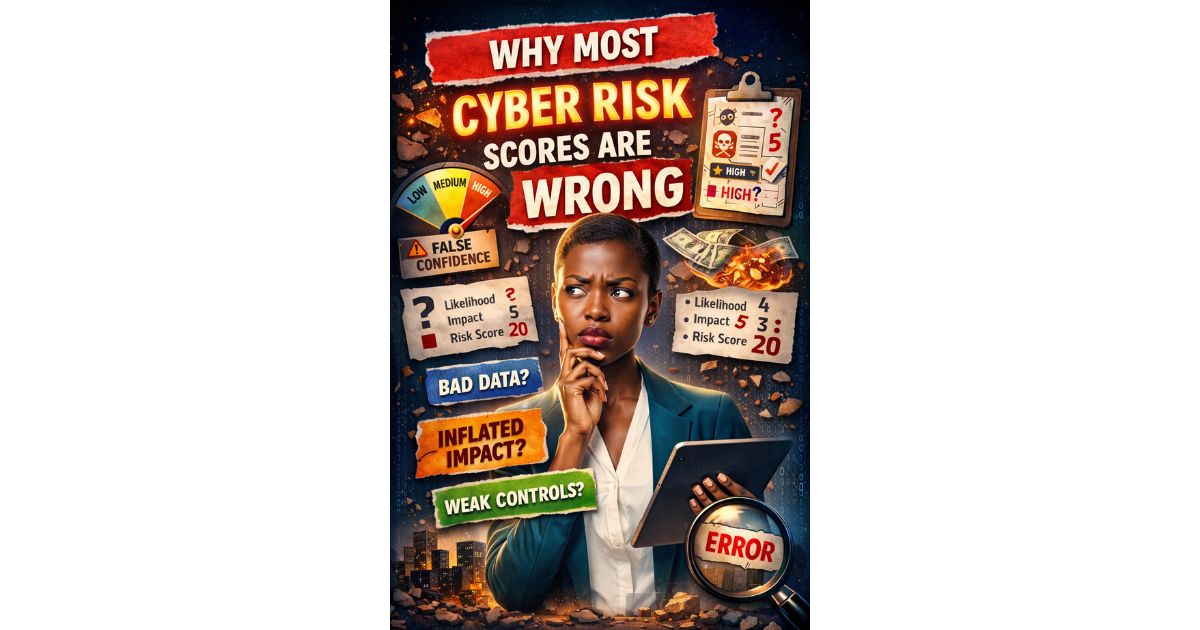

The Illusion of Precision

A risk rated:

Likelihood: 4

Impact: 5

Risk Score: 20

Looks precise.

But ask two simple questions:

- What does “4” actually mean?

- What does “5” actually represent in business terms?

If those definitions are unclear, the score is only structured guesswork.

Precision in format does not equal accuracy in assessment.
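As a minimal sketch of the arithmetic behind that example, assuming the score is simply likelihood multiplied by impact on 1 to 5 scales (the function names and the band cut-offs of 15 and 8 are illustrative assumptions, not a standard):

```python
def risk_score(likelihood: int, impact: int) -> int:
    """Multiply two 1-5 ratings into a 1-25 score."""
    for name, value in (("likelihood", likelihood), ("impact", impact)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return likelihood * impact


def rating_band(score: int) -> str:
    """Map a score to a band. The cut-offs here are assumed for illustration."""
    if score >= 15:
        return "High"
    if score >= 8:
        return "Medium"
    return "Low"


# Two different risk profiles collapse into the same number and label.
print(risk_score(4, 5), rating_band(risk_score(4, 5)))  # 20 High
print(risk_score(5, 4), rating_band(risk_score(5, 4)))  # 20 High
```

The calculation is trivial. All of the meaning sits in how the two inputs were chosen, which the number 20 does not reveal.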

Likelihood Is Often Misjudged

In many organisations, likelihood is scored based on:

- Personal opinion

- Recent headlines

- Gut feeling

- Overconfidence in controls

But proper likelihood assessment should consider:

- Threat capability

- Exposure level

- Historical incident data

- Control maturity

- Detection capability

Without structured criteria, likelihood becomes subjective.

Two departments may score the same risk differently because they interpret probability differently.

That creates inconsistency across the risk register.
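One way to make the criteria explicit is to rate each of the five factors separately and combine them. The sketch below is only an illustration: the factor names, the equal weighting, and the inversion of the two defensive factors are all assumptions that a real scheme would need to agree and document.

```python
from statistics import mean

# Each factor is rated 1 (low) to 5 (high) against written criteria.
RISK_RAISING = ("threat_capability", "exposure_level", "incident_history")
# For these two, a higher rating means stronger defences, so they are inverted.
RISK_LOWERING = ("control_maturity", "detection_capability")


def likelihood_score(factors: dict[str, int]) -> int:
    """Combine factor ratings into one 1-5 likelihood (equal weights assumed)."""
    values = []
    for name in RISK_RAISING + RISK_LOWERING:
        rating = factors[name]
        if not 1 <= rating <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {rating}")
        values.append(rating if name in RISK_RAISING else 6 - rating)
    return round(mean(values))


security_view = {
    "threat_capability": 4, "exposure_level": 5, "incident_history": 3,
    "control_maturity": 2, "detection_capability": 2,
}
# Same risk, but this team assumes its controls are fully mature.
operations_view = {**security_view, "control_maturity": 5, "detection_capability": 5}

print(likelihood_score(security_view))    # 4
print(likelihood_score(operations_view))  # 3
```

The two teams still disagree, but the disagreement is now visible in a specific input (control maturity) that can be checked with evidence, rather than hidden inside a single number.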

Impact Is Frequently Inflated

Impact scoring is where distortion becomes obvious.

Common patterns include:

- Everything related to customer data is rated “High”

- Reputational damage is assumed catastrophic

- Financial impact is estimated without realistic modelling

When too many risks are rated “High,” prioritisation collapses.

If ten risks are critical, none of them truly are.

Impact scoring should be based on clear business consequences, such as:

- How much money the company could lose

- Whether laws or regulations could be breached

- How long operations would be disrupted

- How customers would be affected

Without calibration, impact becomes emotional rather than analytical.
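A calibrated impact scale can be as simple as a lookup against agreed thresholds. The following sketch assumes hypothetical thresholds for financial loss, downtime, and customers affected, and an assumed floor of 4 for any regulatory breach. Every number here is a placeholder that an organisation would replace with its own figures.

```python
from dataclasses import dataclass


@dataclass
class Consequence:
    financial_loss: float    # estimated loss in the organisation's currency
    downtime_hours: float    # expected operational disruption
    customers_affected: int
    regulatory_breach: bool


# Hypothetical thresholds as (minimum value, impact level), highest first.
FINANCIAL_BANDS = [(5_000_000, 5), (1_000_000, 4), (250_000, 3), (50_000, 2)]
DOWNTIME_BANDS = [(72, 5), (24, 4), (8, 3), (1, 2)]
CUSTOMER_BANDS = [(100_000, 5), (10_000, 4), (1_000, 3), (100, 2)]
REGULATORY_FLOOR = 4  # assumed minimum impact when a law or regulation is breached


def band(value: float, bands: list[tuple[float, int]]) -> int:
    """Return the level of the first threshold the value meets, else 1."""
    for minimum, level in bands:
        if value >= minimum:
            return level
    return 1


def impact_score(c: Consequence) -> int:
    """Impact is the worst level across all consequence types."""
    levels = [
        band(c.financial_loss, FINANCIAL_BANDS),
        band(c.downtime_hours, DOWNTIME_BANDS),
        band(c.customers_affected, CUSTOMER_BANDS),
    ]
    if c.regulatory_breach:
        levels.append(REGULATORY_FLOOR)
    return max(levels)


leak = Consequence(financial_loss=300_000, downtime_hours=4,
                   customers_affected=500, regulatory_breach=False)
print(impact_score(leak))  # 3
```

Under these assumed thresholds, a small customer data incident scores 3, not an automatic "High". Taking the maximum across consequence types is one design choice; a weighted combination would be another.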

If Controls Don’t Work, the Risk Score Is Misleading

Another common issue is scoring inherent risk without properly assessing control effectiveness.

Controls may exist on paper but:

- Are not tested

- Are inconsistently applied

- Depend on manual processes

- Lack monitoring

If control strength is overestimated, residual risk is underestimated.

That creates false confidence.

Mid-level analysts understand that control design and operating effectiveness matter just as much as the initial risk score.
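To illustrate how untested controls distort residual risk, the sketch below discounts the credit given to a control when it has not been tested or is not monitored. The multiplicative model and the discount factors (0.5 and 0.75) are assumptions chosen for illustration, not an established formula.

```python
from dataclasses import dataclass


@dataclass
class Control:
    name: str
    design_effectiveness: float  # 0.0-1.0, risk reduction claimed on paper
    tested: bool
    monitored: bool


def credited_effectiveness(control: Control) -> float:
    """Reduce the claimed effectiveness when evidence is missing (assumed discounts)."""
    credit = control.design_effectiveness
    if not control.tested:
        credit *= 0.5
    if not control.monitored:
        credit *= 0.75
    return credit


def residual_score(inherent: float, controls: list[Control]) -> float:
    """Apply each control's credited reduction to the inherent score."""
    remaining = inherent
    for control in controls:
        remaining *= 1 - credited_effectiveness(control)
    return round(remaining, 1)


on_paper = Control("MFA", design_effectiveness=0.6, tested=True, monitored=True)
in_practice = Control("MFA", design_effectiveness=0.6, tested=False, monitored=False)

print(residual_score(20, [on_paper]))     # 8.0
print(residual_score(20, [in_practice]))  # 15.5
```

The same control on the same risk gives a residual score of 8.0 when its effectiveness is taken at face value and 15.5 when the missing evidence is accounted for.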

High-Risk Inflation Weakens Governance

When too many risks are labelled as “High”:

- Escalation stops feeling urgent

- Leaders stop paying attention

- Money gets spent without clear priority

- Risk acceptance happens casually instead of formally

When everything is treated as critical, it becomes hard to see what truly needs action first.

Good governance depends on clear differences between risks.

Risk scoring should help organisations decide what to fix first, not create confusion.

You might ask why escalation stops feeling urgent. Aren’t more “High” ratings meant to create more urgency? After all, “High” should trigger urgency.

But here’s the problem: urgency only works when it’s scarce.

If:

- 1 risk is rated High → It stands out.

- 3 risks are High → They compete for attention.

- 12 risks are High → “High” becomes normal.

When everything is urgent, nothing feels urgent.
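A simple check on the register can make this inflation visible. The sketch below assumes a register is available as a list of rating labels and uses a hypothetical ceiling of 20% for the share of "High" ratings. The right ceiling depends on the organisation.

```python
from collections import Counter


def high_share(ratings: list[str]) -> float:
    """Fraction of risks in the register rated High."""
    if not ratings:
        return 0.0
    return Counter(ratings)["High"] / len(ratings)


def is_inflated(ratings: list[str], max_share: float = 0.2) -> bool:
    """Flag the register when High ratings exceed the assumed ceiling."""
    return high_share(ratings) > max_share


register = ["High"] * 12 + ["Medium"] * 6 + ["Low"] * 2

print(high_share(register))   # 0.6
print(is_inflated(register))  # True
```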

Why This Matters for Decision-Making

Cyber risk scoring is not an academic exercise.

It influences:

- Budget allocation

- Control implementation timelines

- Board reporting

- Audit focus areas

- Risk acceptance decisions

If the scoring process is inconsistent, decisions built on those scores are also inconsistent.

That is where governance begins to weaken.

How to Improve Risk Scoring Without Overcomplicating It

You do not need full quantitative modelling to improve accuracy.

You need to:

- Clearly define what each likelihood rating actually means

- Set clear impact levels based on real business consequences

- Bring different teams together to agree on how risks should be scored

- Regularly review scores to make sure they are applied consistently

- Properly check whether existing controls are actually working

Consistency matters more than mathematical complexity.
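A regular consistency review could start with something as small as comparing how different teams scored the same risk. In this sketch the risk IDs, team names, and the tolerance of one point are hypothetical.

```python
def inconsistent_risks(scores: dict[str, dict[str, int]], tolerance: int = 1) -> list[str]:
    """Return risk IDs whose scores differ across teams by more than `tolerance`."""
    flagged = []
    for risk_id, by_team in scores.items():
        values = list(by_team.values())
        if len(values) < 2:
            continue  # nothing to compare
        if max(values) - min(values) > tolerance:
            flagged.append(risk_id)
    return flagged


# Likelihood ratings given by each team for the same risks (illustrative data).
likelihood_by_team = {
    "R-01": {"IT": 4, "Finance": 2, "Legal": 4},
    "R-02": {"IT": 3, "Finance": 3},
    "R-03": {"IT": 5},
}

print(inconsistent_risks(likelihood_by_team))  # ['R-01']
```

Flagged risks are candidates for a joint calibration session, where the teams agree which definition of each rating applies.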

When scoring logic is transparent and defensible, risk registers become decision tools, not reports that sit on the shelf.

Final Thought

Cyber risk scores are not wrong because people lack intelligence.

They are wrong because scoring systems are often under-designed.

A well-structured scoring framework forces clarity.

And clarity is what enables confident risk decisions.